What is Beetle?

Beetle is a Ruby gem built on top of the bunny and amqp Ruby client libraries for AMQP. It can be used to build a messaging system with the following features:

- High Availability (by using N message brokers)

- Redundancy (by replicating queues on all brokers)

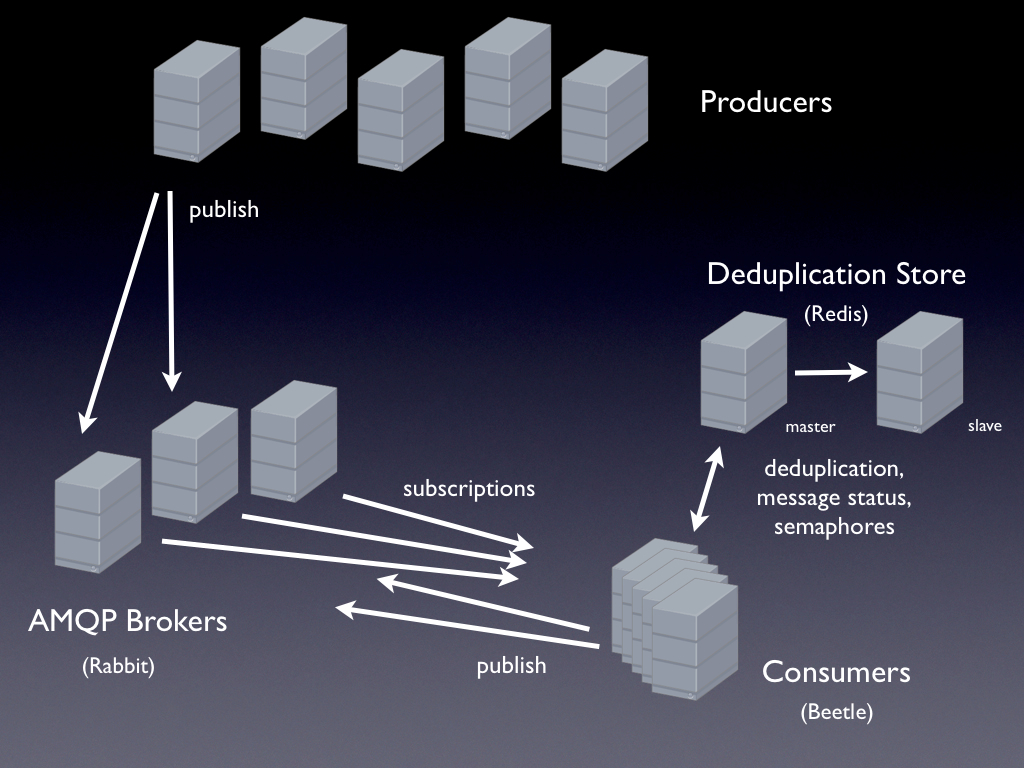

When publishing messages, the producer can decide whether a message is published redundantly or just sent to one of the message brokers in a round-robin fashion. When publishing redundantly, the message is sent to two brokers, with a unique message ID embedded in the message. The subscriber logic of beetle discards the duplicates before invoking application code. This is achieved by storing information about the status of message processing in a so-called deduplication store.
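The publishing and deduplication flow described above can be illustrated with a small, self-contained model. This is only a sketch and does not use beetle's actual API: `FakeBroker`, `SketchPublisher`, and `SketchSubscriber` are hypothetical names, the brokers are plain in-memory arrays, and a `Set` stands in for the deduplication store. The real store tracks more processing state than a simple "seen" flag.

```ruby
require "securerandom"
require "set"

# Hypothetical in-memory stand-in for a message broker (not beetle's API).
class FakeBroker
  attr_reader :queue

  def initialize
    @queue = []
  end

  def deliver(message)
    @queue << message
  end
end

# Hypothetical publisher illustrating the two publishing modes.
class SketchPublisher
  def initialize(brokers)
    @brokers = brokers
    @next = 0
  end

  # Send the message to exactly one broker, rotating round-robin.
  def publish(payload)
    message = { id: SecureRandom.uuid, payload: payload }
    pick(1).each { |broker| broker.deliver(message) }
    message[:id]
  end

  # Send the same message (same unique id) to two distinct brokers.
  def publish_redundantly(payload)
    raise ArgumentError, "need at least two brokers" if @brokers.size < 2

    message = { id: SecureRandom.uuid, payload: payload }
    pick(2).each { |broker| broker.deliver(message) }
    message[:id]
  end

  private

  # Choose `count` consecutive brokers, then advance the rotation by one.
  def pick(count)
    chosen = Array.new(count) { |i| @brokers[(@next + i) % @brokers.size] }
    @next = (@next + 1) % @brokers.size
    chosen
  end
end

# Hypothetical subscriber: a Set plays the role of the deduplication store.
class SketchSubscriber
  def initialize(&handler)
    @seen = Set.new
    @handler = handler
  end

  # Returns true if the handler ran, false if the message was a duplicate.
  def receive(message)
    # Set#add? returns nil when the id is already present.
    return false unless @seen.add?(message[:id])

    @handler.call(message[:payload])
    true
  end
end

brokers = Array.new(3) { FakeBroker.new }
publisher = SketchPublisher.new(brokers)
processed = []
subscriber = SketchSubscriber.new { |payload| processed << payload }

publisher.publish_redundantly("reindex user 42")
brokers.each { |broker| broker.queue.each { |m| subscriber.receive(m) } }
```

In this model two brokers each hold a copy of the message, yet the handler runs only once, because the second copy carries an id that the store has already recorded.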

Project Status

We have completely migrated Xing’s existing (non-redundant) messaging solution to use Beetle instead. The new system has been up and running since the end of March 2010 without any problems. We’ve since used it to successfully parallelize and speed up a number of background tasks (e.g. SOLR indexing), to offload work from our web applications, and to change our architecture to be more event-driven in general.

The first version of beetle was released in April 2010. Since then, we’ve been working part-time on automating the failover process for the deduplication store, which is now available as a deployment option.

Architectural Overview

A typical configuration of a messaging system built with beetle looks like this:

Key System Properties

Given a system with N message brokers and a master/slave redis pair for the message deduplication store:

- N-2 message brokers can crash or be taken down without losing redundantly queued messages while still maintaining the possibility of redundant publishing (upgrading/maintenance becomes a snap)

- N-1 message brokers can crash or be taken down without losing the ability to process messages (as long as the last broker doesn’t crash, of course)

- If the deduplication store master crashes, the consumers will wait for it to come back online (or for the slave to be promoted to a master by the redis failover system)

- If a consumer dies during message processing (e.g. due to an OOM kill), the message will be reprocessed later (if the consumer has been configured for retrying failed message handlers)

- If the redis master dies, the beetle redis failover system will promote the slave to master role (and force the old master to become a slave when it comes back online)
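The broker-related properties above come down to simple arithmetic: redundant publishing needs at least two running brokers, and message processing needs at least one. The following sketch spells this out. The helper `broker_capabilities` is a hypothetical name used for illustration and is not part of beetle.

```ruby
# Hypothetical helper, not part of beetle: reports which capabilities
# remain when only `alive` message brokers are still running.
def broker_capabilities(alive)
  raise ArgumentError, "alive must be non-negative" if alive.negative?

  {
    redundant_publishing: alive >= 2, # holds with up to N-2 brokers down
    message_processing: alive >= 1    # holds with up to N-1 brokers down
  }
end

# Walk through every failure count for an assumed system of N = 4 brokers.
total = 4
(0..total).each do |down|
  caps = broker_capabilities(total - down)
  puts "#{down} of #{total} brokers down: #{caps}"
end
```

With four brokers, redundant publishing survives the loss of two of them and message processing survives the loss of three, which matches the N-2 and N-1 bounds stated in the list.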

Where to go next

- read our first blog post

- read about the redis failover

- read the FAQ page

- check out the examples

- read about the API

- clone the repository and run the examples to see that it actually works

- build your own messaging system

- contribute!